Why People Hate Unit Testing and How to Bring Back the Love

Unit testing assesses even the smallest components of an application, but making it efficient remains a challenge. Here, we look at how the Parasoft Jtest Unit Test Assistant can help software testers overcome that challenge.

We created the Parasoft Jtest Unit Test Assistant to make unit testing more efficient because we know how important it is yet how time-consuming it can be.

It’s well established that unit testing is a core development best practice. At the same time, here at Parasoft, we hear many stories from customers about the insufficient unit test coverage of their code.

So why the gap between best practice and reality?

Let’s look at some of the causes of low unit test coverage and how to get past these obstacles with software automation.

Why Do Unit Testing, Anyway?

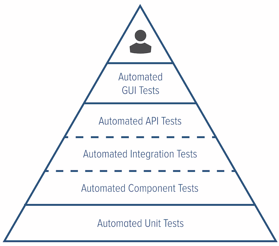

Most development teams will agree that unit testing is valuable. A good unit test suite provides a safety net to application development, enabling teams to accelerate Agile development while mitigating the risk of defects slipping into later stages of the pipeline.

I would go further and say that the process of creating a software unit test is a beneficial activity in and of itself, helping the developer to look at their code through a different lens, essentially doing an additional code review.

While writing a unit test, you review the interface to the functionality from an external point of view and benefit from asking questions like the following.

- How will my code be used? Asking this question can lead to a simplified interface and a lower cost of code maintenance.

- What happens if I get invalid data? This question can result in more robust and reusable code.
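As a small illustration of the second question, here is a minimal sketch of a method and the checks that asking "what happens with invalid data?" tends to produce. The `Percentages` class and its behavior are hypothetical, invented for this example. To keep the sketch dependency-free it uses plain Java checks; in a real project these would normally be JUnit test methods.

```java
// Hypothetical unit under test: parses text such as "42" into a percentage (0..100).
final class Percentages {
    private Percentages() {}

    static int parse(String text) {
        if (text == null || text.trim().isEmpty()) {
            throw new IllegalArgumentException("percentage must not be empty");
        }
        int value;
        try {
            value = Integer.parseInt(text.trim());
        } catch (NumberFormatException e) {
            throw new IllegalArgumentException("not a number: " + text, e);
        }
        if (value < 0 || value > 100) {
            throw new IllegalArgumentException("out of range: " + value);
        }
        return value;
    }
}

// Framework-free checks that mirror what the unit tests would assert.
final class PercentagesChecks {
    static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }

    // Returns true when the input is rejected with IllegalArgumentException.
    static boolean rejects(String input) {
        try {
            Percentages.parse(input);
            return false;
        } catch (IllegalArgumentException expected) {
            return true;
        }
    }

    public static void main(String[] args) {
        check(Percentages.parse(" 42 ") == 42, "valid input should be parsed");
        check(rejects(null), "null should be rejected");
        check(rejects("abc"), "non-numeric text should be rejected");
        check(rejects("101"), "out-of-range value should be rejected");
    }
}
```

Writing the three rejection checks is what prompts the explicit validation in `parse`; without them, the method would probably have accepted `101` silently.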

Our development teams at Parasoft have uncovered many issues in code under development while writing unit tests for that code.

Where Intentions of Great Unit Testing Break Down

Typically, development teams do a minimal amount of unit testing or skip it altogether. Often this is due to some combination of the following two situations.

- The pressure to deliver more and more functionality.

- The complexity and time-consuming nature of creating valuable unit tests.

Reasons for Limited Unit Testing Adoption

Developers commonly cite the following reasons for not adopting unit testing as a core development practice.

- There’s a lot of manual coding involved. Sometimes even more than was required to implement a specific feature or enhancement.

- It’s difficult to understand, initialize, and/or isolate the dependencies of the unit under test.

- Defining appropriate assertions is time-consuming and often requires cycles of running and manually adjusting tests or performing intelligent guesswork.

- It’s just not that interesting. Developers don’t want to feel like testers; they want to spend time delivering more functionality.

Tools to Help With Unit Testing

There are several tools currently available that can help with unit testing.

- Unit testing and assertion frameworks, such as JUnit, provide standardized execution formats for seamless integration into CI infrastructure (Jenkins, Azure DevOps, Bamboo, TeamCity).

- IDEs help in the creation of test code (IntelliJ, Eclipse).

- Mocking frameworks isolate the code from its dependencies (Mockito).

- Code coverage tools provide some visibility into what code was executed (JaCoCo, Emma, Cobertura, Clover).

- Debuggers allow developers to monitor and inspect the step-by-step execution of an individual test.
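To make the idea of isolating code from its dependencies concrete, here is a minimal sketch that uses a hand-written stub instead of a mocking framework. The `ExchangeRateService` and `PriceConverter` names are hypothetical. With Mockito, the stub would typically be created with `mock(...)` and configured with `when(...).thenReturn(...)`; the lambda below plays the same role without any extra dependency.

```java
// Hypothetical dependency that would normally call a remote service.
interface ExchangeRateService {
    double rate(String from, String to);
}

// Hypothetical unit under test: converts an amount using the injected service.
final class PriceConverter {
    private final ExchangeRateService rates;

    PriceConverter(ExchangeRateService rates) {
        this.rates = rates;
    }

    long convertCents(long cents, String from, String to) {
        return Math.round(cents * rates.rate(from, to));
    }
}

final class PriceConverterChecks {
    public static void main(String[] args) {
        // Hand-written stub: always returns a fixed rate, so no network is involved.
        ExchangeRateService fixedRate = (from, to) -> 1.25;

        PriceConverter converter = new PriceConverter(fixedRate);
        long result = converter.convertCents(1000, "EUR", "USD");

        if (result != 1250) {
            throw new AssertionError("expected 1250 but was " + result);
        }
    }
}
```

Because the dependency is passed in through the constructor, the test controls exactly what the converter sees and stays fast and repeatable.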

Expensive Pain Points of Unit Testing

Although these tools are helpful, they don’t address the reasons why developers don’t do enough unit testing. Developers still find many pain points that make unit testing expensive, such as the following:

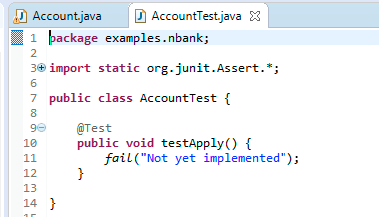

- The IDE helps with creating a skeleton for the unit test, but no content. The developer still needs to add lots of code to create an executing test.

- Mocking frameworks require a significant amount of manual coding to instantiate and configure, plus the knowledge of how to correctly use them.

- Assertions need to be manually defined and tests must be executed to see if the correct values have been asserted.

- Coverage tools provide insight into what code an executed test has covered, but they don’t provide any insight into the runtime behavior of the test.

- Debuggers can be used for an individual test but do not scale to monitor an entire test run.

In summary, the creation of a unit test still requires a lot of manual, time-consuming, often mind-numbing effort before you’ve even started with adding business logic to a test.

The Solution? We Created an Assistant!

To build a tool to help you bypass these pain points, we turned to software testing automation (of course). Parasoft Jtest’s Unit Test Assistant (UTA) is available to help you create a fully functioning unit test at the click of a button.

Tests created with Parasoft Jtest are “regular” JUnits but with all the mundane work done for you. Parasoft Jtest sets up the test framework, instantiates objects, configures mocks for appropriate objects and method calls used by the method under test, and adds assertions for values that change in the tested objects. These JUnits can be executed as part of your standard CI workflow the same way as your existing tests.
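For readers less familiar with this structure, the sketch below shows the general arrange, act, assert shape of such a test, with assertions on each value that changes in the tested object. It is hand-written for illustration and is not actual Jtest output; the `Account` class is hypothetical, and plain Java checks stand in for JUnit assertions to keep the example dependency-free.

```java
// Hypothetical class under test whose state changes when a method is called.
final class Account {
    private long balanceCents;
    private int withdrawalCount;

    Account(long openingBalanceCents) {
        this.balanceCents = openingBalanceCents;
    }

    void withdraw(long cents) {
        if (cents <= 0 || cents > balanceCents) {
            throw new IllegalArgumentException("invalid amount: " + cents);
        }
        balanceCents -= cents;
        withdrawalCount++;
    }

    long balanceCents() {
        return balanceCents;
    }

    int withdrawalCount() {
        return withdrawalCount;
    }
}

final class AccountChecks {
    static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }

    public static void main(String[] args) {
        // Arrange: instantiate the object under test with known state.
        Account account = new Account(10_000);

        // Act: call the method under test.
        account.withdraw(2_500);

        // Assert: check every value that the call changed.
        check(account.balanceCents() == 7_500, "balance should be reduced");
        check(account.withdrawalCount() == 1, "withdrawal should be counted");
    }
}
```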

Parasoft Jtest supports the following test creation workflows:

- Tool-assisted creation of unit tests for newly developed code.

- Bulk generation of unit tests for legacy code.

- Targeted generation of unit tests to cover specific uncovered code blocks.

Tool-Assisted Unit Test Creation for Newly Developed Code

Developers can choose to generate multiple test cases with object initialization and mocks fully configured to cover all branches in their method under test. Alternatively, if they want more control over the generated code, they can build up the test little by little using targeted Parasoft Jtest actions.

Parasoft Jtest’s Unit Test Assistant specializes in an assisted workflow by providing actions that do the following:

- Create test skeletons that call the tested method with all objects initialized.

- Identify method calls that can be mocked to better isolate the code under test, with quick fixes to generate the mocking code.

- Create assertions for object values that change during execution of the test and should be asserted.

- Find tests that do not clean up after themselves and therefore create a potentially unstable test environment (due to the use of threads, external files, static fields, or system properties).
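The last point is easy to overlook when writing tests by hand. As a minimal sketch of what "cleaning up" means for a system property, the helper below restores the previous value in a `finally` block so that later tests see the environment they expect. The `Greeter` class and the `app.lang` property name are hypothetical.

```java
// Hypothetical unit under test that reads global state.
final class Greeter {
    private Greeter() {}

    static String greeting() {
        String lang = System.getProperty("app.lang", "en");
        return lang.equals("de") ? "Hallo" : "Hello";
    }
}

final class GreeterChecks {
    // Runs the unit under test with a temporary property value, then restores
    // whatever was there before, even if the call throws.
    static String greetingWithLanguage(String lang) {
        String key = "app.lang";
        String previous = System.getProperty(key);
        System.setProperty(key, lang);
        try {
            return Greeter.greeting();
        } finally {
            if (previous == null) {
                System.clearProperty(key);
            } else {
                System.setProperty(key, previous);
            }
        }
    }

    public static void main(String[] args) {
        String before = System.getProperty("app.lang");

        if (!greetingWithLanguage("de").equals("Hallo")) {
            throw new AssertionError("German greeting expected");
        }
        // The property is back to its earlier value, so other tests are unaffected.
        if (!java.util.Objects.equals(before, System.getProperty("app.lang"))) {
            throw new AssertionError("system property was not restored");
        }
    }
}
```

A test that sets the property and never restores it would still pass on its own; the instability only shows up in whichever test happens to run next.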

Bulk Unit Test Generation for Legacy Code

Many teams still maintain legacy codebases with lots of untested code. This becomes a business risk when changes need to be made to that code.

Parasoft Jtest allows a developer to generate test suites for entire projects, packages, and classes to quickly build a set of tests that provide high coverage of the legacy code. The tests can be optimized to produce the highest coverage with the minimal set of test cases or to produce slightly less coverage with a more stable and maintainable test suite.

Targeted Unit Test Generation to Cover Specific Uncovered Code Blocks

Often some tests exist for a codebase, but not enough tests to cover all conditions. The main flows are tested, but edge cases or error conditions are left untested.

Parasoft Jtest visually shows which blocks of code are tested and which are not, and it provides context-specific actions to create a test case that covers a chosen uncovered line of code. The generated test case initializes all objects and mocks with the specific values needed to force the test to execute that line.
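Done by hand, the same idea looks roughly like the sketch below: the main flow is already tested, and extra cases pick stub values specifically so that execution reaches the branches that were missed. The `StockLookup` and `OrderPlanner` names are hypothetical, and the code is an illustration of the technique rather than anything Jtest generates.

```java
// Hypothetical dependency, stubbed in the checks below.
interface StockLookup {
    int unitsInStock(String sku);
}

// Hypothetical unit under test with three branches.
final class OrderPlanner {
    private final StockLookup stock;

    OrderPlanner(StockLookup stock) {
        this.stock = stock;
    }

    String plan(String sku, int quantity) {
        int available = stock.unitsInStock(sku);
        if (available >= quantity) {
            return "SHIP";
        }
        if (available > 0) {
            return "PARTIAL"; // reached only when 0 < available < quantity
        }
        return "BACKORDER"; // reached only when nothing is in stock
    }
}

final class OrderPlannerChecks {
    static void check(boolean condition, String message) {
        if (!condition) {
            throw new AssertionError(message);
        }
    }

    public static void main(String[] args) {
        // Main flow: plenty of stock.
        check(new OrderPlanner(sku -> 10).plan("A-1", 3).equals("SHIP"), "main flow");

        // Targeted case: stub returns 2 so that 0 < available < quantity holds.
        check(new OrderPlanner(sku -> 2).plan("A-1", 3).equals("PARTIAL"), "partial branch");

        // Targeted case: stub returns 0 to force the final return statement.
        check(new OrderPlanner(sku -> 0).plan("A-1", 3).equals("BACKORDER"), "backorder branch");
    }
}
```

The work in each targeted case is figuring out which input and stub values satisfy the branch condition, which is the part the paragraph above describes as automated.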

Reduce Unit Testing Time

We created Parasoft Jtest’s Unit Test Assistant to make unit testing more efficient because, as an organization that specializes in intelligent test automation, we know that unit testing is an essential step in creating software that’s safe, secure, reliable, and high quality.

Since we released Parasoft Jtest with the Unit Test Assistant, customers have told us that Parasoft Jtest reduces the amount of time it takes them to create and maintain unit tests by as much as 50%. I hope that you will give it a try and share your experience using Parasoft Jtest to significantly reduce the time it takes to create and maintain your unit tests.