Record & Replay Testing: How to Move Beyond Record-and-Replay for Better Automated API Testing

Go beyond record and playback testing. Read on to discover how to capture the API traffic generated during UI testing and use AI to turn it into meaningful test scenarios, making your API testing more scalable and efficient.

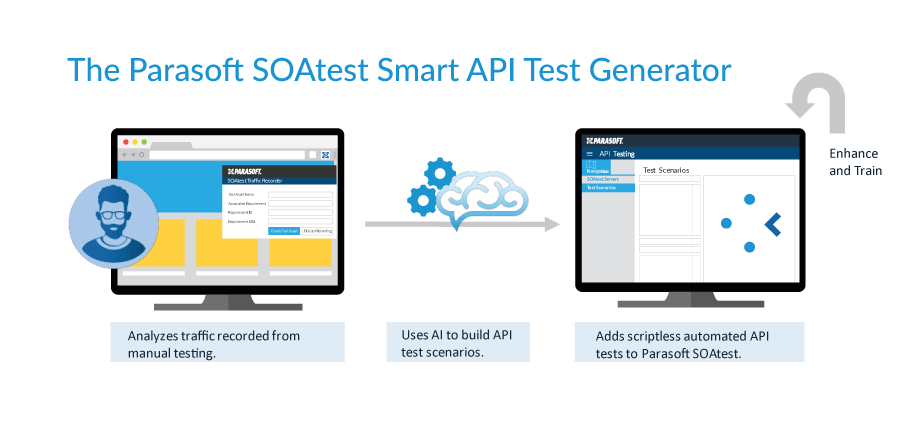

A popular API testing solution for functional, regression, integration, and backend testing, Parasoft SOAtest has a unique feature: Smart API Test Generator.

This groundbreaking technology uses AI to convert traffic captured during manual tests into automated API tests. You start and stop recording with a browser plugin. This scriptless workflow doesn’t require a large tool set or detailed knowledge of the application’s API layer.

Smart API Test Generator isn’t typical UI record and playback technology. Instead of replaying UI actions, it captures the API traffic that underlies the UI interactions, and AI automatically organizes that traffic into meaningful test scenarios. These API scenarios form the basis of API test suites that decouple functional testing from the UI.

What Is API Record & Replay Testing?

The concept of record and playback automation testing is fairly common among UI testing strategies. You record UI actions in the browser—typically with an automated testing tool—and then play back those actions as an automated UI test case. However, much like a film, where you need to edit raw footage for continuity and content, you need to configure recorded test cases for consistent and stable execution and extend them with automated validations and assertions.

UI changes are common in applications under development, and every change can force you to update your tests. That maintenance is time-consuming and erodes the benefits of automation. The underlying API interactions, however, change less often. API testing is therefore a more efficient way to test backend application functionality without the burden of UI test maintenance.

Parasoft was the first to translate this strategy to API testing as part of its comprehensive Continuous Quality Testing Platform. Today, developers and testers can generate vendorless Selenium test scripts using Parasoft Selenic when they want to validate UI behavior or the user experience on the front end. They can generate API tests when they want to focus on functional validation of the underlying business logic or gain efficiency by testing at a lower level of scope.

Advantages of Record & Playback API Testing

UI testing can be brittle. It’s large in scope and takes more time to execute and maintain. API testing is fast, has fewer moving parts, and is more focused on specific business logic. API tests are often configured in a scriptless interface, making them more accessible to nontechnical people.

That said, manual tests are inherently difficult to translate into API tests. Doing so requires good domain knowledge of the underlying services, HTTP call structure, header and token parameterization, and so on. Parasoft SOAtest handles these details for you by analyzing the recorded HTTP traffic and applying AI to create repeatable, stable API tests. If you want to reduce your reliance on UI testing, this is a great way to achieve that goal.
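To make the token-parameterization point concrete, here is a minimal Python sketch of one piece of it: replacing a recorded bearer token with a variable so that a replayed test doesn't send a token that has already expired. The `RecordedRequest` shape, the `${authToken}` placeholder syntax, and both helper functions are hypothetical illustrations, not SOAtest's API or its actual algorithm.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field


@dataclass
class RecordedRequest:
    """One captured HTTP request (hypothetical shape, not a SOAtest type)."""
    method: str
    url: str
    headers: dict[str, str] = field(default_factory=dict)


# Matches an Authorization header value of the form "Bearer <token>".
BEARER = re.compile(r"^Bearer\s+(\S+)$", re.IGNORECASE)
# Matches a ${name} placeholder inside a header value.
PLACEHOLDER = re.compile(r"\$\{(\w+)\}")


def parameterize_tokens(requests: list[RecordedRequest],
                        var: str = "authToken") -> list[RecordedRequest]:
    """Return copies of the requests with literal bearer tokens replaced by
    a ${var} placeholder; the original recordings are left untouched."""
    templates = []
    for req in requests:
        headers = {}
        for name, value in req.headers.items():
            is_bearer = name.lower() == "authorization" and BEARER.match(value)
            headers[name] = f"Bearer ${{{var}}}" if is_bearer else value
        templates.append(RecordedRequest(req.method, req.url, headers))
    return templates


def bind(template: RecordedRequest, variables: dict[str, str]) -> RecordedRequest:
    """Fill ${name} placeholders at run time, e.g. with a token from a fresh login."""
    headers = {
        name: PLACEHOLDER.sub(lambda m: variables[m.group(1)], value)
        for name, value in template.headers.items()
    }
    return RecordedRequest(template.method, template.url, headers)
```

In a generated test, the value bound to `authToken` would come from the response of the scenario's own login call rather than from the recording, which is what keeps the test repeatable.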

More advantages include:

- Reliable and repeatable tests are less prone to breakage due to UI changes.

- Tests focused on specific APIs lead to easier defect detection and remediation.

- Better application coverage with integrated tools within the Parasoft ecosystem:
  - Test environment management: Virtualize
  - Test automation orchestration: CTP
  - Unit testing and code coverage: Jtest
  - Reporting: DTP

Why Did Record & Playback Testing Get a Bad Reputation?

Traditional record and playback tools have their drawbacks. Depending on the application’s architecture, complexity, and release velocity, users may find this approach difficult to implement for a variety of reasons.

- Limited core functionality.

- Lack of record and edit features.

- Not suited for apps that undergo frequent changes, which can cause tests to break.

- Re-recording new tests for existing flows can be time-consuming and redundant.

- Enhancing test cases and implementing them as a part of a mature test automation framework still requires programming skills and knowledge.

These are all valid concerns, and this is where AI fits into API record and playback testing.

How Record & Playback Testing Has Gotten Better

Since its inception, this testing strategy has improved considerably, thanks largely to AI. AI can help make test cases less brittle and improve automation overall, reducing redundant work and closing knowledge gaps.

How AI Improves Record & Playback API Testing

AI for AI’s sake is meaningless. Why do we need to add artificial intelligence to API testing? Well, we need it because record and replay testing isn’t enough.

To scale API testing, it takes more than just collecting traffic, recording it, and playing it back. You need the ability to identify and organize captured API activity into meaningful, reusable, and extensible tests.

This is where artificial intelligence comes into play: it ensures that recorded traffic isn’t just captured but also organized into recognizable scenarios, or patterns of API usage, that occur during UI use cases. This is why Parasoft developed the Smart API Test Generator. The AI-powered tool captures API traffic and coalesces it into reusable, editable test scenarios in Parasoft SOAtest.
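As an illustration of what organizing captured traffic can mean at its simplest, the Python sketch below applies two heuristics assumed for this example: it drops static assets the browser fetched along the way, and it starts a new scenario whenever the user paused for more than a few seconds. The `Call` type, the suffix list, and the 5-second threshold are hypothetical, and this is not a description of how the Smart API Test Generator actually groups traffic.

```python
from __future__ import annotations

from dataclasses import dataclass
from urllib.parse import urlparse

# Hypothetical list of static-asset extensions that are not API calls.
STATIC_SUFFIXES = (".js", ".css", ".png", ".jpg", ".svg", ".woff2", ".ico")


@dataclass
class Call:
    """One captured request (hypothetical shape)."""
    timestamp: float  # seconds since recording started
    method: str
    url: str


def is_api_call(call: Call) -> bool:
    """Crude filter: drop static assets the browser fetched alongside API calls."""
    path = urlparse(call.url).path.lower()
    return not path.endswith(STATIC_SUFFIXES)


def group_into_scenarios(calls: list[Call], max_gap: float = 5.0) -> list[list[Call]]:
    """Split API calls into scenarios wherever the user paused longer than max_gap seconds."""
    scenarios: list[list[Call]] = []
    last_time = None
    for call in sorted(calls, key=lambda c: c.timestamp):
        if not is_api_call(call):
            continue
        if last_time is None or call.timestamp - last_time > max_gap:
            scenarios.append([])  # a long pause suggests a new user action
        scenarios[-1].append(call)
        last_time = call.timestamp
    return scenarios
```

Time gaps are only a rough proxy for user intent; a generator that also looks at how calls depend on each other can keep related calls together even when the user pauses between them.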

How Does Smart API Test Generator Work?

As you test the UI, the Parasoft Recorder monitors the underlying API calls that are made to your application, just like a traffic collector might, and then the Smart API Test Generator uses AI to discover patterns and understand relationships between those API calls. Next, it generates automated API test scenarios that perform the same actions as your UI tests but are fully automated and easily extendable.
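One kind of relationship a generator can look for is data flow: a value returned by one call that reappears in a later request, such as an order ID created by a POST and then used in a GET URL. The Python sketch below finds such links by exact string matching. It is a hypothetical, simplified stand-in for the pattern discovery described above; the `Exchange` type, the `min_len` cutoff, and the JSON-path notation are assumptions made for the example.

```python
from __future__ import annotations

import json
from dataclasses import dataclass


@dataclass
class Exchange:
    """One recorded call, reduced to the parts this sketch inspects (hypothetical shape)."""
    name: str
    request_url: str
    request_body: str
    response_body: str


def leaf_values(node, path="$"):
    """Yield (json_path, text) for each string or integer leaf in parsed JSON."""
    if isinstance(node, dict):
        for key, child in node.items():
            yield from leaf_values(child, f"{path}.{key}")
    elif isinstance(node, list):
        for i, child in enumerate(node):
            yield from leaf_values(child, f"{path}[{i}]")
    elif isinstance(node, (str, int)) and not isinstance(node, bool):
        yield path, str(node)


def find_dependencies(exchanges: list[Exchange], min_len: int = 4) -> list[tuple[str, str, str]]:
    """Return (producer, json_path, consumer) links where a response value
    reappears verbatim in a later request's URL or body."""
    links = []
    for i, producer in enumerate(exchanges):
        try:
            doc = json.loads(producer.response_body)
        except ValueError:
            continue  # empty or non-JSON response: nothing to extract
        for path, value in leaf_values(doc):
            if len(value) < min_len:
                continue  # short values such as "1" or "NEW" would match by coincidence
            for consumer in exchanges[i + 1:]:
                if value in consumer.request_url or value in consumer.request_body:
                    links.append((producer.name, path, consumer.name))
    return links
```

Once a link is found, the hard-coded value in the consumer request can be replaced with a variable extracted from the producer response. A fuller version would keep only the earliest producer for each value (given a create, get, pay sequence that all carry the same order ID, this sketch also reports a redundant get-to-pay link) and would search request headers as well.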

Parasoft’s Smart API Test Generator consists of three components.

- Recorder. This Chrome browser plugin records traffic. It’s installed locally on a desktop and provides a user interface for configuring the connection to SOAtest and for starting and stopping recording.

- SOAtest Web Proxy. Handles HTTP and HTTPS traffic, storing it for later use by SOAtest.

- SOAtest. A complete functional, end-to-end testing solution that analyzes the traffic and translates it into API tests.

You can set up and execute tests by connecting the Smart API Test Generator to a SOAtest server running either locally or on a dedicated machine. The Chrome browser extension lets you start and stop traffic recording, configure connections to the SOAtest server and the web proxy, and save the traffic as files.
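The article doesn't specify the file format the Recorder uses when it saves traffic. Assuming an HTTP Archive (HAR 1.2) file, the format Chrome DevTools exports, a minimal Python loader that turns saved traffic into request/response pairs might look like the following. The `Exchange` type and the `load_har` function are hypothetical; only the HAR field names come from the HAR format itself.

```python
from __future__ import annotations

import json
from dataclasses import dataclass, field


@dataclass
class Exchange:
    """One recorded request/response pair (hypothetical shape)."""
    method: str
    url: str
    status: int
    request_headers: dict[str, str] = field(default_factory=dict)
    request_body: str = ""
    response_body: str = ""


def load_har(text: str) -> list[Exchange]:
    """Parse a HAR 1.2 document into Exchange records, in file order."""
    exchanges = []
    for entry in json.loads(text)["log"]["entries"]:
        req, resp = entry["request"], entry["response"]
        exchanges.append(Exchange(
            method=req["method"],
            url=req["url"],
            status=resp["status"],
            # HAR stores headers as a list of {"name": ..., "value": ...} objects;
            # if a name repeats, the later value wins in this dict.
            request_headers={h["name"]: h["value"] for h in req.get("headers", [])},
            # postData and content.text are optional in HAR, so default to "".
            request_body=req.get("postData", {}).get("text", ""),
            response_body=resp.get("content", {}).get("text", ""),
        ))
    return exchanges
```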

These recordings are pushed to SOAtest, where heuristics and AI come into play. SOAtest organizes the traffic recordings into API tests that represent patterns detected within the traffic. The generated tests are ordinary SOAtest API tests: they are extensible, maintainable, and executable within SOAtest, and they can become part of a new or existing test suite.

Let’s Take It a Step Further

All of this is valuable in its own right. Beyond that, Parasoft’s API test generation helps developers and testers understand the relationships between UI actions and API calls, improving both their API testing skills and their knowledge of the application.

The Smart API Test Generator takes on the heavy lifting, giving you an easy, scriptless place to start building API tests. It lowers the technical entry point to API testing, bringing beginners into the API testing world via user-friendly test automation provided by Parasoft SOAtest. These visual tools are easy to adopt and use.

The test scenarios automatically created by SOAtest provide the building blocks for API test suites that are both human-readable and extensible. Testers can build their understanding of the APIs and the application functionality from these tests and extend and reuse them to build up an entire suite of tests.

Teams can reuse these API functional tests for nonfunctional testing needs, including load, performance, and security testing. This reduces testers’ reliance on manual and UI-focused testing for much of the application’s functional testing.
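The reuse idea can be sketched in a few lines of Python: treat a functional API test as a callable that returns whether its assertions passed, then run it from several concurrent workers and summarize the pass rate and latency. This is a generic thread-pool sketch with hypothetical names (`run_as_load` and its summary keys), not how SOAtest's load testing works; dedicated load tools also control ramp-up, pacing, and request rate.

```python
from __future__ import annotations

import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def run_as_load(test: Callable[[], bool], users: int, iterations: int) -> dict:
    """Run one functional test users * iterations times across `users`
    concurrent workers; `test` returns True when its assertions pass."""
    def timed_run(_: int) -> tuple[bool, float]:
        start = time.perf_counter()
        try:
            passed = bool(test())
        except Exception:
            passed = False  # an exception counts as a failed iteration
        return passed, time.perf_counter() - start

    with ThreadPoolExecutor(max_workers=users) as pool:
        results = list(pool.map(timed_run, range(users * iterations)))

    latencies = sorted(elapsed for _, elapsed in results)
    return {
        "runs": len(results),
        "pass_rate": sum(passed for passed, _ in results) / len(results),
        "median_s": statistics.median(latencies),
        "max_s": latencies[-1],
    }
```

In practice, `test` would wrap one of the generated scenarios, for example a closure that replays a login-and-add-to-cart sequence and checks each response, so the same assertions that validate functionality also flag errors under load.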

Benefits of AI-Enhanced API Record & Playback Testing

Traditional UI-based record and playback testing differs in important ways from AI-augmented API testing. Here are some of the benefits of the API record and playback testing that Parasoft SOAtest provides.

- Reduces time spent determining the right way to build API tests by automatically converting the actions performed in your browser into automated API tests that model the same actions you performed in the right order.

- Makes it easier to build comprehensive API tests by automatically creating testing scenarios based on the relationships between the different API calls. Without this, you have to spend time investigating test cases, looking for patterns, and manually building the relationships to form each test scenario.

- Automatically adds assertions and validations to ensure your APIs work as intended. As a result, you can apply even complex assertion logic without writing code and with less risk of building it incorrectly.

- Reduces the time spent maintaining tests. Because it’s scriptless, users don’t have to spend time rewriting code for test cases when a service changes. Built-in tools like the Change Advisor analyze API changes and construct a template to make it easy to implement updates.

- Helps development and test teams collaborate through a single artifact that both teams can easily understand and share. An API test also pinpoints the root cause of a defect more precisely than a UI test.

- Lays the foundation for a scalable and efficient regression testing strategy by helping you extend test cases, test flow logic, and data solutions to accomplish the entire scope of functional test coverage needed to fully validate applications before releasing them into production.
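The assertion-generation benefit can be illustrated with a minimal Python sketch that derives checks from one recorded JSON response: an exact status-code check, exact-value checks for stable fields, length checks for arrays, and type-only checks for fields that change on every run. The field-name pattern used to spot volatile values (such as `id` or `createdAt`) and the `(kind, path, expected)` tuple format are assumptions for the example, not SOAtest's rules.

```python
from __future__ import annotations

import json
import re

# Assumed naming convention for values that change on every run: "id", "uuid",
# camelCase "...Id"/"...At", snake_case "..._id"/"..._at", or "timestamp".
VOLATILE = re.compile(r"^(id|uuid)$|Id$|_id$|At$|_at$|timestamp")


def generate_assertions(status: int, body: str) -> list[tuple[str, str, object]]:
    """Derive (kind, json_path, expected) assertions from one recorded response."""
    assertions: list[tuple[str, str, object]] = [("status", "", status)]

    def walk(node: object, path: str, key: str) -> None:
        if isinstance(node, dict):
            for child_key, child in node.items():
                walk(child, f"{path}.{child_key}", child_key)
        elif isinstance(node, list):
            assertions.append(("length", path, len(node)))
            for i, child in enumerate(node):
                walk(child, f"{path}[{i}]", key)
        elif VOLATILE.search(key):
            # Volatile values: check only the type so a new ID doesn't fail the test.
            assertions.append(("type", path, type(node).__name__))
        else:
            assertions.append(("equals", path, node))

    walk(json.loads(body), "$", "")
    return assertions
```

Type-only checks for volatile fields are a common compromise: the test still fails if an ID disappears or changes type, but not merely because the server issued a new one.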

The Smart API Test Generator in SOAtest automatically creates test cases based on a meaningful interpretation of the captured API activity, producing tests that are easy to use and maintain. By pushing functional testing down the test pyramid to smaller-scope tests and reducing reliance on slow, brittle UI regression tests, development and test teams can release faster and test more productively while maintaining high test coverage.