See what API testing solution came out on top in the GigaOm Radar Report. Get your free analyst report >>

Parasoft Virtualize

OVERVIEW

Limited access to real data and live services in your test environment often constrains software testing. Create virtual simulations that behave like the real thing with Parasoft service virtualization. Test any workflow, anytime.

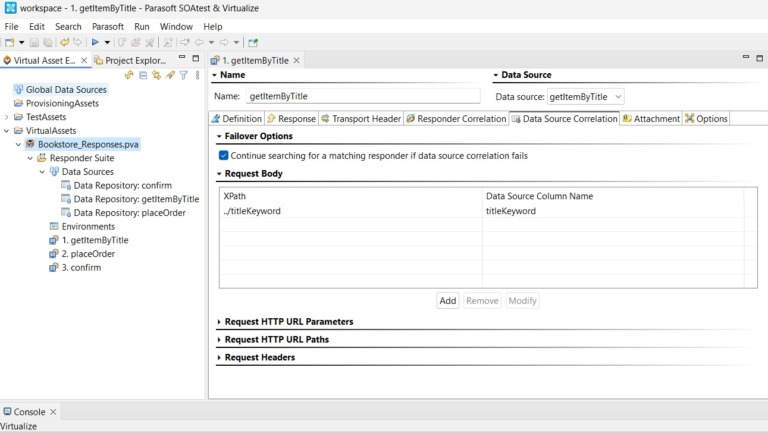

Create virtual services to handle any scenario with ease. Build reliable, predictable service simulations using broad support for over 120 industry-standard message formats and protocols. No scripting required.

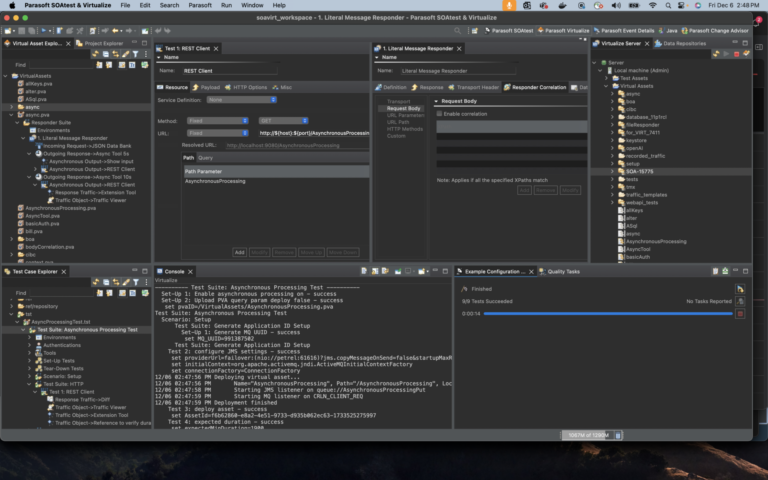

Confidently build environments that behave (and fail) like the real thing. Simulate services, model test data, and stabilize dependencies, using live system behavior to build virtual test environments that you can clone, share, deploy, and destroy on demand.

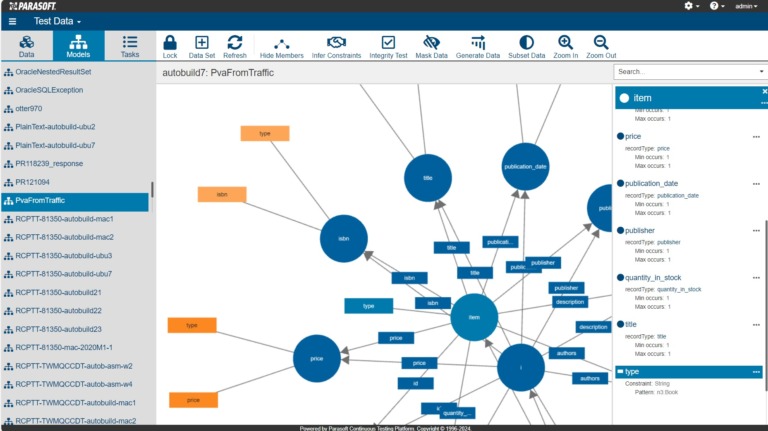

Testing gets delayed when the right test data isn’t available. It leads to inefficiency and missed deadlines. Eliminate bottlenecks and generate or mask diverse synthetic datasets with on-demand virtual test data. Enable comprehensive, reliable, secure testing without relying on production data.

Discover the potential ROI your organization could experience with Parasoft’s service virtualization solution.

PARASOFT VIRTUALIZE CAPABILITIES

Test any system, anytime. Simulate the behavior of dependent components in complex systems to enable realistic testing even when components are unavailable or difficult to access.
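
To make the idea concrete, here is a minimal sketch of a simulated dependency written with Python's standard library. It is a generic illustration of service virtualization, not Parasoft Virtualize's API or configuration format. The paths, payloads, and the names `CANNED`, `start_virtual_service`, and `fetch` are all hypothetical.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

# Hypothetical canned behavior: request path -> (status code, JSON body).
CANNED = {
    "/accounts/42": (200, {"id": 42, "balance": 100.0}),
    "/accounts/13": (503, {"error": "backend unavailable"}),  # simulated failure
}


class VirtualServiceHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Unknown paths fall back to a 404, like a real service would.
        status, body = CANNED.get(self.path, (404, {"error": "not found"}))
        payload = json.dumps(body).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # suppress per-request logging


def start_virtual_service():
    """Start the stub on a free local port and return (server, base_url)."""
    server = HTTPServer(("127.0.0.1", 0), VirtualServiceHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server, f"http://127.0.0.1:{server.server_port}"


def fetch(url):
    """GET a URL and return (status, parsed JSON), including error statuses."""
    # An empty ProxyHandler keeps local requests from going through a proxy.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(url) as resp:
            return resp.status, json.loads(resp.read())
    except urllib.error.HTTPError as err:
        return err.code, json.loads(err.read())
```

A test can point the application under test at the returned base URL instead of the real service, then exercise both the success path and the simulated outage without waiting for the real dependency to be available.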

Accelerate development, reduce dependencies, and achieve continuous testing by eliminating constraints in test environments.

Navigate the challenges of unstable test environments and unavailable system dependencies with Virtualize. Streamline testing by simulating dependent services, minimizing delays and disruptions. Empower comprehensive testing to deliver high-quality software with confidence.

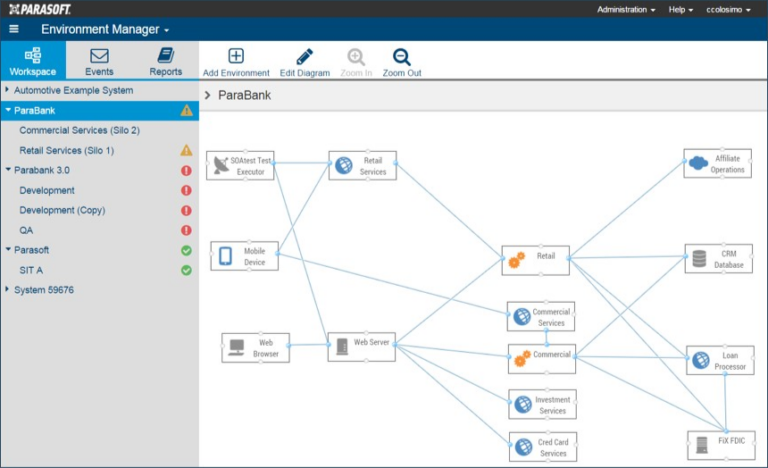

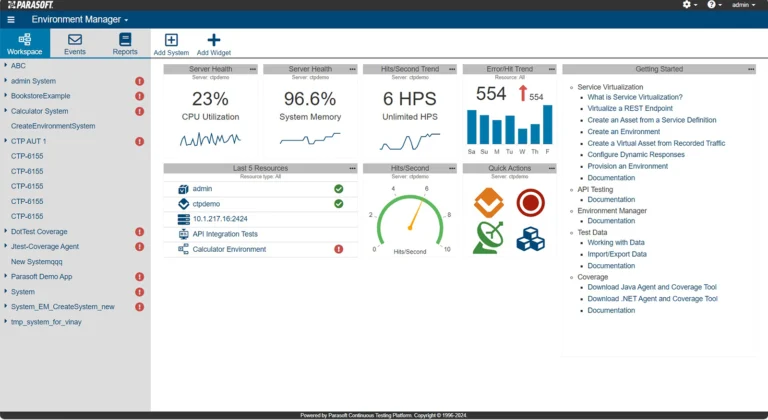

Easily manage test environments with our intuitive web interface. Deploy virtual test environments with dependent components configured as needed for testing scenarios. Decouple your application from external dependencies to stabilize test automation operations and enable continuous testing.

Avoid testing conflicts. Generate synthetic data or mask production data to eliminate issues caused by shared data sources, which can lead to unreliable regression testing and false failures. Guard against delays in test data provisioning with virtual data, which ensures reliable, efficient, and streamlined testing processes.
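
As a rough illustration of what masking production data can look like, the sketch below replaces sensitive fields with deterministic tokens so that the same input always maps to the same masked value. This is one generic approach and an assumption for illustration only, not a description of how Parasoft Virtualize masks data. The function name `mask_record`, the field names, and the salt are hypothetical.

```python
import hashlib


def mask_record(record, sensitive_fields, salt="test-env"):
    """Return a copy of record with the named fields replaced by stable tokens."""
    masked = dict(record)  # leave the original record untouched
    for field in sensitive_fields:
        if field in masked:
            raw = (salt + str(masked[field])).encode("utf-8")
            digest = hashlib.sha256(raw).hexdigest()
            # Keep a short prefix of the hash so tokens stay readable.
            masked[field] = f"masked-{digest[:12]}"
    return masked


# Hypothetical customer row used only for illustration.
customer = {"id": 7, "email": "jane@example.com", "plan": "gold"}
masked_customer = mask_record(customer, ["email"])
```

Because the masking is deterministic, records that shared a value before masking still share one afterward, so lookups and joins in tests keep working while the real values stay out of the test environment.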

Optimize testing of your APIs. Use service virtualization in the same environment for full control over service dependencies. Streamline CI/CD workflows, test microservices in isolation, simplify test environment management, and deploy fully virtual test environments to enable continuous testing of API workflows.

Go beyond test results. Get insights into the health and utilization of your service virtualization infrastructure to optimize performance and demonstrate ROI. Customizable dashboards and detailed asset use data enable you to monitor real-time server performance and track virtual service usage.

Virtualize supports more than 120 message formats and protocols. It integrates seamlessly with popular build systems and CI infrastructures. Containerize or deploy it in the cloud for maximum flexibility.

Explore our deployment models to fit your team’s testing needs and maturity. Get started with the Virtualize free edition today!

INTEGRATIONS

Virtualize integrates with Parasoft CTP to enable environment provisioning with detailed reports for test environment health, monitoring, event reporting, virtualization utilization, and more.

Take control of your test environment. Stabilize your test automation with Parasoft Virtualize.